Spark on YARN: Complete Setup

I. Ubuntu Installation

Three machines: node1, node2, and node3.

Ubuntu minimal installation:

https://blog.csdn.net/qq_41004932/article/details/124955610

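Setting the initial root password on Ubuntu

A fresh Ubuntu installation has no usable root password, so one has to be set.

1. Log in as the user created during installation.

2. Run: sudo passwd and press Enter.

3. Enter the new password and repeat it; the final message is passwd: password updated successfully

The root password is now set.

4. Run: su root

Reset the hostname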
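The notes list this step without commands. A minimal sketch, assuming systemd's hostnamectl (standard on Ubuntu) and a hypothetical address plan: only node1's address (192.168.153.131) appears in this guide, so the node2 and node3 addresses below are placeholders to adapt.

```shell
#!/usr/bin/env bash
# Name-to-address map shared by all three machines.
# ASSUMPTION: only 192.168.153.131 (node1) comes from this guide;
# the .132 and .133 addresses are placeholders.
hosts_entries() {
  cat <<'EOF'
192.168.153.131 node1
192.168.153.132 node2
192.168.153.133 node3
EOF
}

# Print the entries for review; nothing on the system is changed here.
hosts_entries

# To apply on each machine, run by hand:
#   sudo hostnamectl set-hostname node1     # node2 / node3 on the other machines
#   hosts_entries | sudo tee -a /etc/hosts
```

Keeping the map in one function makes it easy to paste the same entries on all three machines, so that the node1/node2/node3 names used in the later scp commands and URLs resolve everywhere.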

Configure the network interface with a static IP

vi /etc/netplan/00-installer-config.yaml

Before (DHCP, as installed):

network:
  ethernets:
    ens33:
      dhcp4: true
  version: 2

After (static IP):

network:
  ethernets:
    ens33:
      dhcp4: no
      addresses: [192.168.153.131/24]
      gateway4: 192.168.153.2
      nameservers:
        addresses: [8.8.8.8, 8.8.4.4]
  version: 2

# Apply the changes

sudo netplan apply

Disable the firewall

systemctl stop ufw

systemctl disable ufw

systemctl status ufw

II. SSH Setup

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQDOi24D5KnKcSPOILCJE+1Ocqnkci3ffSGTbyY9FO4bsOGrnBLXexdhC3GZ/yGvscayQLTo1tI/G8j/7fZD1rAQo+B+UktsJXW4TilNoPGPOaZfs3bRB47ehqnNOPgRe2jwWB3RMX8/hsdDhdogTE9EbU2tP4+WyjgUXQIYINe6FYnPsThhuNiAQXbuJEGeKrTVtLvDOO8poOOH1ihc9yhMPvmaVbUmfi+nihImkCT/W6YQ6Z6rRR1m+/Empr+O6eCDn+yEOjJHEOZmvvWFGCPaprp8lDwNdyibnRdNov/mf38ZIC+ioMPjtPkIe86VP56KqRIhX/oASFhLePoKbAuPkxFnN+VSh8NPyIxOQDj6RRliZts/7Osu4Qbr6lzqO8rp9dMfphPtr6fhTjCY2aCP6C+tWAklnRh+gZbXsXc17p7tGZPcodv7gwHOgOGmpwEmpQPASD6M1MO7zPNGvDSvfxC7S4Ky8cd8PgREvdMH82mi182EG1r7ie+CVyFsoK8= ubuntu@node1

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQDCA6yahy/6p+1YtzWoGjk9VpmeHGcSYNyZ/AJm9Ah6tGU8umbO6z5sxMSk9Egj/DC/hC+aFbHit9f++HP2p3gn59Yi3eljRPdQ6/Lvhz1d6NRpyhZUx2vzd/abFTYt0FKpm9pnW6rZfgo9M0rpGd4AzTIAvrDXg14BoWxM9hD/Cs9bq7LUzMnOpaGBf82QAdfeDPo+QdTY3v41SndNVg8D43gvo39P3OJDtY9OtnYsDquOVRInXcWqAm+0b04fVkNwvenOXEPeQObsRA4R5NPlrL+C9/rwO/XqOH9FaN1uXD8uO5xMu7dWtZcapRtiiQo7ldS0iI47o3+anYEpZaFd6oM/cz+t75d4tsq/BXcmaP3zzLefrl1McoX6R+1WKtmyPDF2TQSwMdyVOdmJM+A5t8HATqR3xgDfJnaJlOtaF3x7kPuv8iOICpD0OwMbLhAYaEESh9hZpRDe3cWV1vwSwWVABywjigfl0DZdgqxoLYhrh0D/a3DOYlVWv2l5oIs= ubuntu@node2

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQCsPpGIQbx++GcVrwyg7MMfjnNH2lZGtojtfX5Rh47+B0obN2mkf82zJswSiK+lpS2ty1uXQ7edboVKb06Ft1ZOm4JP1SkxbqZzfqP1olwTWofJ/64C+7sKVGij+9ePQx3TvaFtwGuwgv9zIXtaj4aGUyc1IN9+jqVqYiPnzcRCfkUFtUxCTWMQvzACgVjVJTOPcCUl9YgvMJNH2/ahAQ47iNtdQi1Zl1jRyY7fwNKPMTTN0Id3bfHHrpZx8bXoCnDl3+KneI7544itTp5W0k6GmDAzvdV/28Q/G7usgXtUI94N17D30ZUtndvZ+GRP3z7q+agq3olkw9H7pJKToMado5uxED95UlvB/w5knOhdPTZ9CSJy2uzKMDbzwd0j2I1X1dfjGWQWyGLYdmUeiZuTrT0p+KMx5l6IkjZq0J/y8DMqTHyuCEPEMNGJEeF5sOYgQGJ1JZe/9TC2nWNg/MrsL/mqfZBhmabXN7mo9VfyQ84TXXBm7K+qhVxrp8VwuuM= ubuntu@node3

Copy the id_rsa.pub key over and save it as master_rsa.pub.

Then append that key to the authorized_keys file:

cat master_rsa.pub >> ~/.ssh/authorized_keys
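Appending with >> adds a duplicate entry every time the step is repeated. A minimal sketch of an idempotent variant follows; add_key is a hypothetical helper name, and the default directory ~/.ssh with 700/600 permissions is the usual OpenSSH layout rather than something stated in these notes.

```shell
#!/usr/bin/env bash
# add_key PUBKEY_FILE [SSH_DIR]
# Append a public key to SSH_DIR/authorized_keys unless it is already there.
# (add_key is a hypothetical helper, not an OpenSSH tool.)
add_key() {
  local pub="$1" dir="${2:-$HOME/.ssh}"
  mkdir -p "$dir" && chmod 700 "$dir"
  touch "$dir/authorized_keys"
  # -F fixed string, -x whole line, -f read the pattern from the key file
  grep -qxFf "$pub" "$dir/authorized_keys" || cat "$pub" >> "$dir/authorized_keys"
  # With StrictModes, sshd rejects key files that others can write to.
  chmod 600 "$dir/authorized_keys"
}

# Usage on the receiving node, after copying the key over:
#   add_key ~/master_rsa.pub
```

Where password login is still enabled, ssh-copy-id ubuntu@node2 does the copy and the append in a single step.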

III. Java Installation

Installing Java JDK 8 on Ubuntu 20.04 (over a remote session)

sudo apt-get update

sudo apt-get install openjdk-8-jdk

java -version

The package installs the binaries but does not set any environment variables, so set them manually:

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

export PATH=$PATH:${JAVA_HOME}/bin
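Exports typed into a shell are lost at logout. A minimal sketch for persisting them, assuming a bash login that reads ~/.bashrc; append_once is a hypothetical helper, and the same pattern works for the SCALA_HOME, HADOOP_HOME, and SPARK_HOME exports in the later sections.

```shell
#!/usr/bin/env bash
# append_once LINE FILE: add LINE to FILE only if it is not already present.
# (append_once is a hypothetical helper, not part of any package.)
append_once() {
  local line="$1" file="$2"
  touch "$file"
  grep -qxF -e "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage (single quotes keep the variables unexpanded in the file):
#   append_once 'export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64' ~/.bashrc
#   append_once 'export PATH=$PATH:${JAVA_HOME}/bin' ~/.bashrc
#   source ~/.bashrc
```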

https://blog.csdn.net/qq_36801710/article/details/79306319

IV. Scala Installation

https://www.scala-lang.org/download/

curl -fL https://github.com/coursier/coursier/releases/latest/download/cs-x86_64-pc-linux.gz | gzip -d > cs && chmod +x cs && ./cs setup

cs install scala:2.13.12 && cs install scalac:2.13.12

Alternatively, download the tarball and install it manually:

https://scala-lang.org/download/2.13.12.html

tar -zxvf scala-2.13.12.tgz

mv scala-2.13.12 scala

export SCALA_HOME=/home/ubuntu/scala

export PATH=$PATH:$SCALA_HOME/bin

V. Hadoop Installation

https://archive.apache.org/dist/hadoop/common/hadoop-3.3.4/

hadoop-3.3.4.tar.gz

tar -zxvf hadoop-3.3.4.tar.gz

mv hadoop-3.3.4 hadoop

export HADOOP_HOME=/home/ubuntu/hadoop

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Configuration

- hadoop-env.sh

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64
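The notes copy core-site.xml, yarn-site.xml, and workers between nodes but do not show their contents. The sketch below writes a minimal version inferred from the rest of this guide: hdfs://node1:9000 comes from the Spark event-log setting, the ResourceManager host from the node1:8088 web UI, and the worker list from the DataNode URLs on all three nodes. These values are assumptions, not the actual files; hdfs-site.xml, mapred-site.xml, and all tuning are left out.

```shell
#!/usr/bin/env bash
# write_conf DIR: write minimal core-site.xml, yarn-site.xml and workers into DIR.
# ASSUMPTION: values are inferred from this guide, not copied from the real files.
write_conf() {
  local dir="$1"
  mkdir -p "$dir"
  cat > "$dir/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://node1:9000</value>
  </property>
</configuration>
EOF
  cat > "$dir/yarn-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node1</value>
  </property>
</configuration>
EOF
  # One worker hostname per line.
  printf '%s\n' node1 node2 node3 > "$dir/workers"
}

# Usage: write into a scratch directory, review, then copy into
# /home/ubuntu/hadoop/etc/hadoop:
#   write_conf /tmp/hadoop-conf-sketch
```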

scp -r /home/ubuntu/hadoop/etc node2:/home/ubuntu/hadoop/

scp -r /home/ubuntu/hadoop/etc node3:/home/ubuntu/hadoop/

Or copy the files one at a time:

scp core-site.xml node2:/home/ubuntu/hadoop/etc/hadoop

scp hdfs-site.xml node2:/home/ubuntu/hadoop/etc/hadoop

scp mapred-site.xml node2:/home/ubuntu/hadoop/etc/hadoop

scp yarn-site.xml node2:/home/ubuntu/hadoop/etc/hadoop

scp workers node2:/home/ubuntu/hadoop/etc/hadoop

scp core-site.xml node3:/home/ubuntu/hadoop/etc/hadoop

scp hdfs-site.xml node3:/home/ubuntu/hadoop/etc/hadoop

scp mapred-site.xml node3:/home/ubuntu/hadoop/etc/hadoop

scp yarn-site.xml node3:/home/ubuntu/hadoop/etc/hadoop

scp workers node3:/home/ubuntu/hadoop/etc/hadoop
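Ten near-identical scp lines are easy to mistype. The same copy can be sketched as a loop; sync_conf and the DRY_RUN switch are hypothetical names, and the function only prints the commands unless DRY_RUN=0 is set.

```shell
#!/usr/bin/env bash
CONF_DIR=/home/ubuntu/hadoop/etc/hadoop
CONF_FILES="core-site.xml hdfs-site.xml mapred-site.xml yarn-site.xml workers"

# sync_conf: copy every config file to node2 and node3.
# With DRY_RUN=1 (the default here) the scp commands are only printed.
sync_conf() {
  local host f
  for host in node2 node3; do
    for f in $CONF_FILES; do
      if [ "${DRY_RUN:-1}" = 1 ]; then
        echo "scp $CONF_DIR/$f $host:$CONF_DIR/"
      else
        scp "$CONF_DIR/$f" "$host:$CONF_DIR/"
      fi
    done
  done
}

sync_conf              # dry run: prints the commands
# DRY_RUN=0 sync_conf  # actually copy
```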

# Format the NameNode once, on node1, from /home/ubuntu/hadoop

./bin/hdfs namenode -format

./sbin/start-all.sh

Or start the daemons one by one.

jps
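jps lists the running JVMs, one "PID ClassName" pair per line. A minimal sketch for checking that the expected daemons are up follows; check_daemons is a hypothetical helper, and the per-node daemon list in the usage comment assumes node1 hosts the NameNode and ResourceManager and is also a worker.

```shell
#!/usr/bin/env bash
# check_daemons NAME...: read `jps` output on stdin and report missing daemons.
# Returns 0 when every NAME is present, 1 otherwise.
check_daemons() {
  local running d missing=0
  running=$(awk '{print $2}')   # second column of jps output is the class name
  for d in "$@"; do
    if ! printf '%s\n' "$running" | grep -qx "$d"; then
      echo "missing: $d"
      missing=1
    fi
  done
  return "$missing"
}

# Usage on node1 (ASSUMPTION about which daemons run there):
#   jps | check_daemons NameNode DataNode ResourceManager NodeManager
#
# Starting daemons individually with the Hadoop 3 commands:
#   hdfs --daemon start namenode          # node1
#   hdfs --daemon start datanode          # every worker
#   yarn --daemon start resourcemanager   # node1
#   yarn --daemon start nodemanager       # every worker
```

Matching whole names with grep -x keeps SecondaryNameNode from being counted as NameNode.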

http://node1:9870/ # NameNode web UI

http://node1:9864/ # DataNode web UI (node1)

http://node2:9864/ # DataNode web UI (node2)

http://node3:9864/ # DataNode web UI (node3)

http://node1:8088/ # ResourceManager web UI

Reformatting the NameNode

https://blog.csdn.net/hylpeace/article/details/88371411

VI. Spark Installation

spark-3.2.4-bin-hadoop3.2-scala2.13.tgz

tar -zxvf spark-3.2.4-bin-hadoop3.2-scala2.13.tgz

mv spark-3.2.4-bin-hadoop3.2-scala2.13 spark

export SPARK_HOME=/home/ubuntu/spark

export PATH=$PATH:$SPARK_HOME/bin

cp spark-env.sh.template spark-env.sh

Edit spark-env.sh:

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

export SCALA_HOME=/home/ubuntu/scala

export HADOOP_HOME=/home/ubuntu/hadoop

export HADOOP_CONF_DIR=/home/ubuntu/hadoop/etc/hadoop

export YARN_CONF_DIR=/home/ubuntu/hadoop/etc/hadoop

export SPARK_CONF_DIR=/home/ubuntu/spark/conf

scp /home/ubuntu/spark/conf/spark-env.sh node2:/home/ubuntu/spark/conf/

Versions used: Hadoop 3.3.4, Spark 3.2.4

Spark and Scala version compatibility:

https://mvnrepository.com/artifact/org.apache.spark/spark-sql

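When Spark is deployed in Spark on YARN mode, no Spark cluster needs to be started; after spark-submit hands a job over, YARN takes care of resource scheduling.

In standalone mode you first start a Spark cluster (one master plus workers) and then submit jobs to it. With Spark on YARN, YARN plays the master and worker roles, and jobs are submitted to YARN.

The Spark cluster itself exists mainly for resource management.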

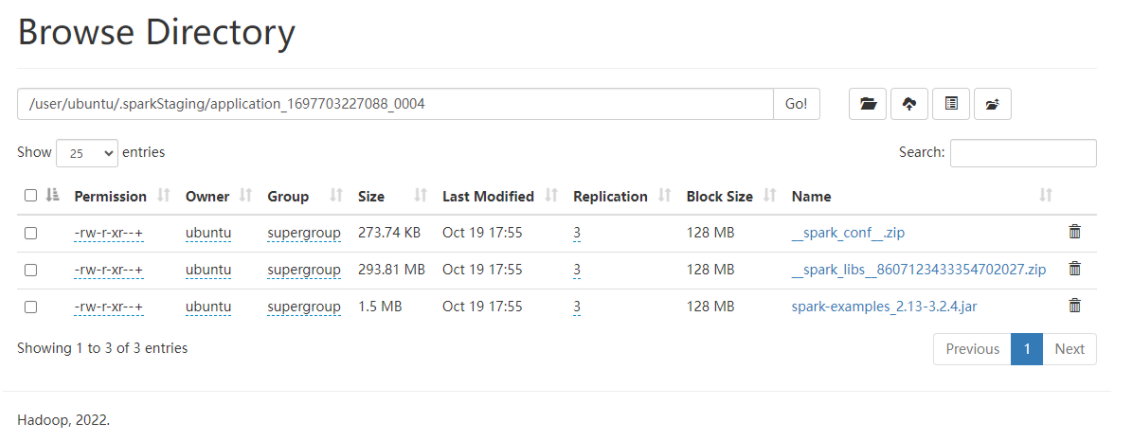

spark-submit --master yarn --deploy-mode cluster --class org.apache.spark.examples.SparkPi --name SparkPi --num-executors 1 --executor-memory 1g --executor-cores 1 /home/ubuntu/spark/examples/jars/spark-examples_2.13-3.2.4.jar 100
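In cluster mode the driver runs inside a YARN container, so SparkPi's result line ("Pi is roughly ...") ends up in the container logs rather than on the terminal. A minimal sketch for fetching it follows; app_id and submit.log are hypothetical names, and yarn logs only returns output for finished applications when YARN log aggregation is enabled, which these notes do not confirm.

```shell
#!/usr/bin/env bash
# app_id: read spark-submit output on stdin, print the first YARN application id.
# (app_id is a hypothetical helper name.)
app_id() {
  grep -oE 'application_[0-9]+_[0-9]+' | head -n 1
}

# Usage:
#   spark-submit --master yarn --deploy-mode cluster ... 2>&1 | tee submit.log
#   yarn logs -applicationId "$(app_id < submit.log)" | grep 'Pi is roughly'
```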

hdfs dfs -mkdir -p /spark/sparkhistory

Edit spark-defaults.conf:

spark.yarn.historyServer.address node1:18080

spark.history.ui.port 18080

spark.eventLog.enabled true

spark.eventLog.dir hdfs://node1:9000/spark/sparkhistory

spark.eventLog.compress true

scp spark-defaults.conf node2:/home/ubuntu/spark/conf

In spark-env.sh, set SPARK_HISTORY_OPTS so that its values match the corresponding settings in spark-defaults.conf:

export SPARK_HISTORY_OPTS="-Dspark.history.ui.port=18080 -Dspark.history.retainedApplications=3 -Dspark.history.fs.logDirectory=hdfs://node1:9000/spark/sparkhistory"
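If the two directories differ, the history server starts but lists no applications. A minimal sketch of a consistency check follows; check_history_dirs is a hypothetical helper, and it assumes both files use the one-setting-per-line layout shown in these notes.

```shell
#!/usr/bin/env bash
# check_history_dirs SPARK_CONF_DIR
# Compare spark.eventLog.dir (spark-defaults.conf) with
# spark.history.fs.logDirectory (SPARK_HISTORY_OPTS in spark-env.sh).
check_history_dirs() {
  local conf="$1" event_dir hist_dir
  event_dir=$(awk '$1 == "spark.eventLog.dir" {print $2}' "$conf/spark-defaults.conf")
  hist_dir=$(grep -oE 'spark\.history\.fs\.logDirectory=[^" ]+' "$conf/spark-env.sh" \
    | head -n 1 | cut -d= -f2)
  if [ -n "$event_dir" ] && [ "$event_dir" = "$hist_dir" ]; then
    echo "OK: $event_dir"
  else
    echo "MISMATCH: eventLog.dir='$event_dir' logDirectory='$hist_dir'"
    return 1
  fi
}

# Usage:
#   check_history_dirs /home/ubuntu/spark/conf
```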

/home/ubuntu/spark/sbin/start-history-server.sh

jps

http://node1:18080/

Submit the job again so that it shows up in the history server:

spark-submit --master yarn --deploy-mode cluster --class org.apache.spark.examples.SparkPi --name SparkPi --num-executors 1 --executor-memory 1g --executor-cores 1 /home/ubuntu/spark/examples/jars/spark-examples_2.13-3.2.4.jar 100

hadoop fs -getfacl /user

hdfs dfs -setfacl -R -m user:zzz:r-x filepath

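The command above grants the account zzz read access to filepath.

-R applies the change recursively to filepath and to the directories and files beneath it.

Note that the x (execute) permission must be included, otherwise the grant does not take effect.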
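A likely reason for the x requirement is that HDFS follows a POSIX-style permission model, in which entering a directory needs execute permission on it. -R covers filepath and everything below it, but the directories above filepath must also be traversable by that user. A minimal sketch that lists those parent directories follows; ancestors is a hypothetical helper and /spark/sparkhistory/app.log is only an example path.

```shell
#!/usr/bin/env bash
# ancestors PATH: print every parent directory of PATH below the root,
# nearest parent first. (ancestors is a hypothetical helper.)
ancestors() {
  local p
  p=$(dirname "$1")
  while [ "$p" != "/" ] && [ "$p" != "." ]; do
    echo "$p"
    p=$(dirname "$p")
  done
}

# Usage: grant traverse-only access on each parent, then inspect the result.
#   for d in $(ancestors /spark/sparkhistory/app.log); do
#     hdfs dfs -setfacl -m user:zzz:--x "$d"
#   done
#   hdfs dfs -getfacl /spark/sparkhistory
```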

HDFS authorization and the dr.who user

https://blog.csdn.net/weixin_42656458/article/details/113176108